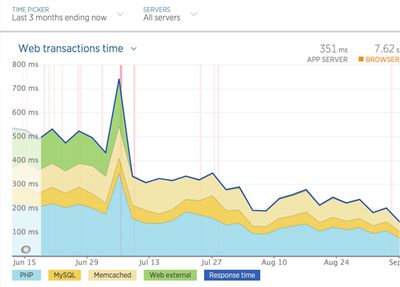

This past summer we started seeing a higher frequency of alerts from New Relic for one of our clients. Although we are constantly working on improving performance, we were perplexed by what could be causing the sudden onslaught of warnings. It turns out that the problem stemmed not from the application itself, but from our client’s file system.

Moving in the right direction.

The application is a high-traffic publishing site running on Drupal 7, with many editors and writers uploading thousands of images per month. Unless the application’s file system pre-emptively saves those images into distributed subfolders, the root of the files/ directory becomes flooded over time, which, left unchecked, invariably leads to severe performance issues. The details are below.

Too Many Files == Performance Implications

Our client’s infrastructure is hosted by Acquia, which provides a lot of best-practice documentation. For sites on their cloud platform, Acquia asserts that “over 2500 files in any single directory in the files structure can seriously impact a server's performance and potentially its stability”[1] and advises customers to split files into subfolders to avoid the problem. This is a long-standing issue for Drupal 7 that is still being hashed out. Drupal 8 takes care of file distribution by default.
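To see which directories are over that threshold, a quick audit helps. The following is a minimal Python sketch, not anything Acquia or Drupal ships; the function name and the `sites/default/files` path are our own assumptions for illustration.

```python
import os

# Threshold taken from the Acquia guidance quoted above.
MAX_FILES_PER_DIR = 2500


def crowded_directories(root, limit=MAX_FILES_PER_DIR):
    """Return {directory: file_count} for every directory under `root`
    that directly contains more than `limit` files."""
    crowded = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        # `filenames` lists only the entries directly inside `dirpath`.
        if len(filenames) > limit:
            crowded[dirpath] = len(filenames)
    return crowded


if __name__ == "__main__":
    # Hypothetical path; point this at the site's real files directory.
    for path, count in sorted(crowded_directories("sites/default/files").items()):
        print(f"{count:>8}  {path}")
```

Counting only the files that sit directly in each directory matters here, because the limit applies per directory rather than to the tree as a whole.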

Migration Scripts to the Rescue

When we realized the client’s files/ directory was pushing 50,000 files, we were on a mission to move their existing and future files into subfolders. Luckily for us, Acquia developed a drush script that works out of the box to move a site’s files into distributed dated subfolders.

Anatomy of a Files Directory Vivisection

Our first run of the Acquia script shaved the files/ root directory down to about 10,000 files. This was a great start, but we wanted to get the number of files well below 2500, per Acquia’s recommendation. We deduced that the 10,000 files left over after the initial run of the drush script were mostly unmanaged files referenced by hard-coded image and anchor tags written into body fields, as well as legacy files from the previous migration that were missing file extensions.

Further investigation revealed another similar problem. During a previous content migration from a legacy CMS into Drupal, approximately 60,000 images were saved to a single subfolder (in our case called migrated-images), and all of them had the same timestamp: the date of the migration. By default the Acquia script creates subfolders by year/month/day as needed and moves files into them based on their timestamp. When we ran the drush script again on migrated-images, we found that all the files were indeed moved, but they all landed in a single dated subfolder, 2017/07/21, the date we ran the Acquia script. So we were back to square one.
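The reason date-based bucketing could not help here is easy to show. The sketch below is our own Python illustration of the idea, not the Acquia script itself; the function names are hypothetical, and it assumes the folder is derived from a Unix timestamp interpreted in UTC.

```python
from collections import Counter
from datetime import datetime, timezone


def dated_subfolder(timestamp):
    """Map a Unix timestamp to a year/month/day folder name (UTC assumed)."""
    return datetime.fromtimestamp(timestamp, tz=timezone.utc).strftime("%Y/%m/%d")


def bucket_counts(timestamps):
    """Count how many files would land in each dated subfolder."""
    return Counter(dated_subfolder(ts) for ts in timestamps)


if __name__ == "__main__":
    # 60,000 files that all share one timestamp collapse into one folder.
    same_day = datetime(2017, 7, 21, 12, 0, tzinfo=timezone.utc).timestamp()
    print(bucket_counts([same_day] * 60000))
```

However many files go in, identical timestamps always produce exactly one bucket, so a different distribution key was needed for this folder.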

Files, Subfolders, and Triage

So now we had two more problems to solve:

- How to shave down the root files/ directory, which still had ~10k files in it after the first run of the Acquia script.

- How to reduce the file count of the subfolder migrated-images, which had ~60k images all with the same timestamp.

To tackle the problem of the remaining ~10k files in the root files/ directory, we ran a series of update hooks to do the following:

- Create nodes from the images to get them into the managed files table so Drupal knows about them (in our use case, media is added as nodes - photos, videos, etc).

- Update the hard-coded references in WYSIWYG body fields to their new file paths.

- Move the legacy files with missing extensions into manageable subfolders.

Shout out to my clever colleague who wrote this hook_update and a bash script to deal with the remaining ~10k files. After running all these updates, we got our files count in the root files/ folder down to a few hundred. Phew.
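For a sense of what the second step involves, here is a minimal Python sketch of rewriting hard-coded references in a body field. It is not the hook_update mentioned above; the `/sites/default/files/` prefix, the function name, and the `moved` mapping are all assumptions made for illustration.

```python
import re

# Assumption: public files are served from this URL prefix.
FILES_PREFIX = "/sites/default/files/"

# Matches src="..." or href="..." values that point at a file sitting
# directly in the files root (no further "/" after the prefix).
ATTR_PATTERN = re.compile(
    r'(?P<attr>\b(?:src|href)=")' + re.escape(FILES_PREFIX) + r'(?P<name>[^"/]+)"'
)


def rewrite_references(html, moved):
    """Rewrite root-level file references using `moved`, a mapping of
    old file name -> new path relative to the files directory."""

    def replace(match):
        new_path = moved.get(match.group("name"))
        if new_path is None:
            # Unknown file: leave the reference untouched.
            return match.group(0)
        return f'{match.group("attr")}{FILES_PREFIX}{new_path}"'

    return ATTR_PATTERN.sub(replace, html)
```

References that already point into a subfolder, or to files missing from the mapping, are deliberately left alone so the update can be re-run safely.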

To re-distribute the ~60k+ images that all landed in a subfolder from a previous migration, we refactored the drush script. It creates a parent folder with a variable number of child subfolders into which the source directory files are evenly distributed. The refactored script takes an integer as an argument to determine the number of child subfolders that should be created, as well as a source directory option which is relative to the files/ directory.

drush @site.prod migration_filepath_custom 100 --source=migrated-images

Running the command above took all the files in the source directory migrated-images and saved them into a parent directory, evenly distributing them between 100 subfolders. The naming convention for the newly created directory structure looks like this inside files/:

migrated-images (source directory)

migrated-images_parent

|__ migrated-images_1

|__ migrated-images_2

|__ migrated-images_3

…

|__ migrated-images_100

So when the refactored script finished, migrated-images (which originally held ~60k files) was empty, migrated-images_parent contained 100 subfolders whose directory names were differentiated by _N, and each migrated-images_N folder held approximately 600 images. Score!
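The distribution logic can be sketched in a few lines. This is a Python illustration of the idea rather than the refactored drush script: the function names are hypothetical, round-robin assignment is our assumption about how "evenly" is achieved, and a real Drupal run would also need to update the file URIs that Drupal tracks for managed files, which is left out here.

```python
import os
import shutil


def plan_distribution(filenames, source, buckets):
    """Assign each file to <source>_parent/<source>_N (N in 1..buckets),
    round-robin, and return {file name: new path relative to files/}."""
    if buckets < 1:
        raise ValueError("buckets must be a positive integer")
    parent = f"{source}_parent"
    plan = {}
    for index, name in enumerate(sorted(filenames)):
        child = f"{source}_{index % buckets + 1}"
        plan[name] = os.path.join(parent, child, name)
    return plan


def distribute(files_root, source, buckets):
    """Move every regular file out of files_root/source according to the plan."""
    src_dir = os.path.join(files_root, source)
    names = [n for n in os.listdir(src_dir)
             if os.path.isfile(os.path.join(src_dir, n))]
    plan = plan_distribution(names, source, buckets)
    for name, relative_target in plan.items():
        target = os.path.join(files_root, relative_target)
        os.makedirs(os.path.dirname(target), exist_ok=True)
        shutil.move(os.path.join(src_dir, name), target)
    return plan
```

Separating the plan from the move makes it possible to inspect the resulting layout before touching any files. With 60,000 files and 100 buckets, round-robin gives exactly 600 files per folder.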

The Future Depends on the Decisions Made Now

The other vital piece to resolving the too-many-files-in-a-single-directory problem was how to save new files going forward. Acquia recommends some contributed modules to address this problem. One such module we tried was File (Field) Paths. At first it seemed like a good, simple solution: set the relative file paths in the configuration options and we were good to go. But we soon discovered that it tanked when using bulk uploading functionality. With the amount of media the content editors generate, maintaining zippy bulk uploading of assets was essential. The bulk uploader running slower than molasses was a deal-breaker.

After more research into the issues around this in core, we opted to patch core. As of this writing, the latest proposed core patch moves files into dated subfolders using the following tokens:

'file_directory' => '[date:custom:Y]-[date:custom:m]'

For our client’s site, it’s entirely possible that in any given month, well over 2500 files could be uploaded into the Drupal file system. So to get around this issue, we applied an alternate patch to save files using the following tokens to add a day folder:

'file_directory' => '[date:custom:Y]/[date:custom:m]/[date:custom:d]'

The bulk uploading of files ran swiftly with this solution! Now both legacy and future files have a place in the Drupal file system that will no longer threaten the root files/ directory and, most importantly, the application’s performance.
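To illustrate the difference between the two token patterns, here is a small Python sketch. The strftime patterns mirror the Y, m and d date formats used in the tokens, and the upload volume (100 files a day for one month) is a made-up workload, not a measurement from the client’s site.

```python
from collections import Counter
from datetime import date, timedelta

MONTHLY = "%Y-%m"    # mirrors [date:custom:Y]-[date:custom:m]
DAILY = "%Y/%m/%d"   # mirrors [date:custom:Y]/[date:custom:m]/[date:custom:d]


def largest_folder(upload_dates, pattern):
    """Return (folder, file_count) for the fullest folder under `pattern`."""
    counts = Counter(d.strftime(pattern) for d in upload_dates)
    return counts.most_common(1)[0]


if __name__ == "__main__":
    # Hypothetical workload: 100 uploads a day throughout June 2017.
    uploads = [date(2017, 6, 1) + timedelta(days=day)
               for day in range(30) for _ in range(100)]
    print(largest_folder(uploads, MONTHLY))
    print(largest_folder(uploads, DAILY))
```

Under this workload the monthly pattern puts 3,000 files in a single folder, which is over the 2500 guideline, while the daily pattern caps every folder at 100.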

After hustling to make all these changes, we’re delighted to report that the application has been running smoothly. It was a formidable lesson in file management and application performance optimization that I’m relieved will not be an issue in future iterations of Drupal.

References:

- https://acquia.my.site.com/s/article/360005373314-Proactively-organizing-files-in-subfolders

- https://acquia.my.site.com/s/article/360005434833-Optimizing-file-paths-Organizing-files-in-subfolders

- https://www.drupal.org/node/2128055
